Machine Learning

-

A mean effect can hide who should and should not receive an edit. That fail-closed boundary is part of the product, not a footnote.

-

A hospital switches on a sepsis alert and mortality moves. That does not mean the alert caused the change. The hard part is separating who gets flagged from what the flag changes.

-

Prediction can describe where a molecule sits. Causal reasoning helps decide which lever is worth trying next, what it may improve, and when the evidence is too weak to act.

-

A clean A/B test is already a causal design. The trouble starts when someone conditions on post-treatment behavior, slices the experiment the wrong way, or forgets what randomization actually bought them.

-

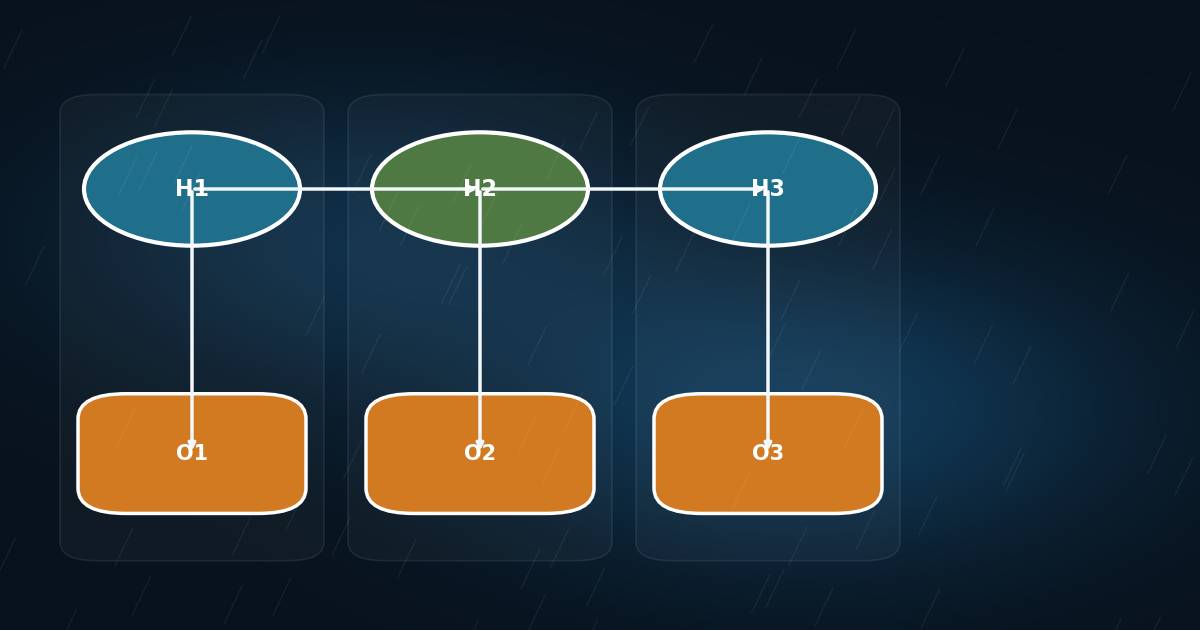

Once you unroll time, an HMM is just an ordinary Bayesian network with repeated structure. That makes temporal reasoning much less mysterious and much more programmable.
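
To make the unrolling concrete, here is a minimal sketch. It assumes a hypothetical two-state weather HMM; the state names and every probability are invented for illustration. It treats the HMM as a plain Bayesian network with one hidden node and one observed node per time step, sums the joint over all hidden paths, and checks that the usual forward recursion gives the same likelihood.

```python
from itertools import product

# Hypothetical parameters, chosen only for illustration.
states = ("rain", "dry")
prior = {"rain": 0.5, "dry": 0.5}
trans = {"rain": {"rain": 0.7, "dry": 0.3},
         "dry": {"rain": 0.3, "dry": 0.7}}
emit = {"rain": {"umbrella": 0.9, "none": 0.1},
        "dry": {"umbrella": 0.2, "none": 0.8}}


def joint(hidden, observed):
    """Joint probability of one hidden path and the observations,
    read directly off the unrolled network's factorization."""
    p = prior[hidden[0]] * emit[hidden[0]][observed[0]]
    for prev, cur, obs in zip(hidden, hidden[1:], observed[1:]):
        p *= trans[prev][cur] * emit[cur][obs]
    return p


def likelihood_by_enumeration(observed):
    """Marginalize the unrolled network by brute force over hidden paths."""
    paths = product(states, repeat=len(observed))
    return sum(joint(h, observed) for h in paths)


def likelihood_by_forward(observed):
    """Same quantity via the forward recursion, which exploits the
    repeated structure instead of enumerating every path."""
    alpha = {s: prior[s] * emit[s][observed[0]] for s in states}
    for obs in observed[1:]:
        alpha = {s: emit[s][obs] * sum(alpha[r] * trans[r][s] for r in states)
                 for s in states}
    return sum(alpha.values())


observed = ("umbrella", "umbrella", "none")
brute = likelihood_by_enumeration(observed)
forward = likelihood_by_forward(observed)
print(abs(brute - forward) < 1e-12)
```

Enumeration costs time exponential in the sequence length, while the forward pass is linear in it. That gap is what the repeated structure buys.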

-

Counterfactuals are where causal APIs stop describing populations and start answering the harder question: what would have happened for this exact case?

-

If observing X and setting X collapse to the same operation in your tooling, confounding has already beaten you.
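
A small sketch of why the two operations must stay separate. It assumes a hypothetical three-variable model in which a binary confounder Z drives both X and Y; all probabilities are made up for illustration. Conditioning on X reweights Z by how likely it is given X, while setting X leaves Z at its population distribution.

```python
# Hypothetical model: Z -> X, Z -> Y, X -> Y. All numbers are illustrative.
P_Z1 = 0.5                      # P(Z = 1)
P_X1_GIVEN_Z = {0: 0.2, 1: 0.8}  # P(X = 1 | Z = z)


def p_y1(x, z):
    """P(Y = 1 | X = x, Z = z) under the assumed model."""
    return 0.1 + 0.2 * x + 0.5 * z


def p_z(z):
    return P_Z1 if z == 1 else 1.0 - P_Z1


def p_x_given_z(x, z):
    p1 = P_X1_GIVEN_Z[z]
    return p1 if x == 1 else 1.0 - p1


def observe(x):
    """P(Y = 1 | X = x): Z is weighted by its posterior given X = x."""
    p_x = sum(p_x_given_z(x, z) * p_z(z) for z in (0, 1))
    return sum(p_y1(x, z) * p_x_given_z(x, z) * p_z(z) / p_x for z in (0, 1))


def do(x):
    """P(Y = 1 | do(X = x)): Z keeps its marginal distribution,
    because forcing X cuts the Z -> X edge."""
    return sum(p_y1(x, z) * p_z(z) for z in (0, 1))


print(round(observe(1), 2), round(do(1), 2))
```

In this toy model the observed rate for X = 1 comes out higher than the interventional one, because units with Z = 1 are over-represented among those seen with X = 1.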

-

A treatment can look worse in the aggregate and better in every subgroup. That is not a corner case. It is the reason your query surface needs association, adjustment, and intervention.
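
The reversal is easy to reproduce. The sketch below uses the widely quoted kidney-stone counts from textbook treatments of Simpson's paradox, purely as an illustration. The adjustment step assumes stone size is a pre-treatment confounder, which is an assumption about the causal graph and not something the table itself can tell you.

```python
from fractions import Fraction

# (successes, total) per treatment and stratum; textbook illustration counts.
counts = {
    "A": {"small": (81, 87), "large": (192, 263)},
    "B": {"small": (234, 270), "large": (55, 80)},
}
strata = ("small", "large")


def stratum_rate(treatment, stratum):
    """Association within one subgroup."""
    successes, total = counts[treatment][stratum]
    return Fraction(successes, total)


def aggregate_rate(treatment):
    """Association in the pooled data, ignoring the stratum."""
    successes = sum(counts[treatment][z][0] for z in strata)
    total = sum(counts[treatment][z][1] for z in strata)
    return Fraction(successes, total)


def adjusted_rate(treatment):
    """Backdoor adjustment: weight each stratum-specific rate by the
    stratum's share of the whole population, not of the treated group."""
    stratum_totals = {z: sum(counts[t][z][1] for t in counts) for z in strata}
    n_all = sum(stratum_totals.values())
    return sum(stratum_rate(treatment, z) * Fraction(stratum_totals[z], n_all)
               for z in strata)


for name, fn in (("aggregate", aggregate_rate), ("adjusted", adjusted_rate)):
    print(name, {t: round(float(fn(t)), 3) for t in counts})
```

Pooled, B looks better. Within each stratum and after adjustment, A does. Which number answers the question depends on whether you are asking about association or about what happens if you assign the treatment.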

-

Lead optimization does not stall because teams lack scores. It stalls because scores do not say which analog to make next under competing objectives.

-

In this post, we show that it is possible to apply causal learning and inference to massive, high-dimensional data. We built a causal model of 207 molecular properties (descriptors from RDKit) over 41,990 drug molecules from ChEMBL targeting breast cancer (MCF-7).