Causal Inference

-

When we pooled seven related anxiety-drug starting points into one workflow, the story stopped sounding like one lucky case study and started sounding like a reusable decision framework.

-

One of our internal lorazepam-centered workflows makes the point bluntly: if a program insists on staying too close to the seed molecule, it can systematically avoid the better region of chemistry.

-

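A mean effect can hide who should and should not receive an edit: a positive average can coexist with a subgroup the edit harms. Declining to recommend when the evidence for a case is too weak is a fail-closed boundary, and it is part of the product, not a footnote.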
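To make the subgroup point concrete, here is a minimal sketch with made-up numbers. The subgroup names, effect sizes, and standard errors are hypothetical, and the "act only if the whole ~95% interval clears zero" rule is one assumed way to implement a fail-closed boundary, not necessarily the rule our workflows use.

```python
from dataclasses import dataclass

@dataclass
class SubgroupEstimate:
    name: str
    effect: float     # estimated change in the objective if the edit is applied
    std_error: float  # uncertainty of that estimate
    weight: float     # share of the population in this subgroup

def mean_effect(groups):
    """Population-weighted average effect: the single number that can mislead."""
    total = sum(g.weight for g in groups)
    return sum(g.weight * g.effect for g in groups) / total

def decide(group, z=1.96):
    """Fail closed (assumed rule): act only when the ~95% interval is on one side of zero."""
    lower = group.effect - z * group.std_error
    upper = group.effect + z * group.std_error
    if lower > 0:
        return "apply"
    if upper < 0:
        return "avoid"
    return "abstain"  # evidence too weak either way, so no recommendation

# Hypothetical subgroups; every number here is illustrative.
groups = [
    SubgroupEstimate("A", effect=0.8, std_error=0.2, weight=0.6),
    SubgroupEstimate("B", effect=-0.5, std_error=0.15, weight=0.3),
    SubgroupEstimate("C", effect=0.3, std_error=0.4, weight=0.1),
]

print(f"mean effect: {mean_effect(groups):+.2f}")
for g in groups:
    print(g.name, decide(g))
```

The pooled mean comes out positive (+0.36), yet subgroup B is harmed and subgroup C is too uncertain to call, so a single "apply the edit" verdict would be wrong for two of the three groups.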

-

A recommendation gets stronger when it shows up as a populated region of chemistry, not a lone theoretical win. That happened in one of our lorazepam-centered internal workflows.

-

In one of our internal lorazepam-centered workflows, the most useful result was not one magic winner. It was a coordinated direction of change that improved safety without breaking the rest of the objective bundle.

-

Prediction can describe where a molecule sits. Causal reasoning helps decide which lever is worth trying next, what it may improve, and when the evidence is too weak to act.

-

A clean A/B test is already a causal design. The trouble starts when someone conditions on post-treatment behavior, slices the experiment the wrong way, or forgets what randomization actually bought them.
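A small simulation shows how conditioning on post-treatment behavior breaks an otherwise clean randomized test. Everything here is hypothetical: `u` stands in for unobserved user engagement, `active` for any behavior the treatment itself changes, and by construction the treatment has zero true effect on the outcome.

```python
import random
from statistics import fmean

def simulate(n=200_000, seed=0):
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        u = rng.gauss(0.0, 1.0)                  # latent engagement, never observed
        t = rng.random() < 0.5                   # randomized assignment
        active = u + (1.0 if t else 0.0) > 0.5   # post-treatment behavior: treatment nudges more users into "active"
        y = u + rng.gauss(0.0, 0.5)              # outcome depends on u only: true effect of t is zero
        rows.append((t, active, y))
    return rows

def diff_in_means(rows):
    treated = [y for t, _, y in rows if t]
    control = [y for t, _, y in rows if not t]
    return fmean(treated) - fmean(control)

rows = simulate()
overall = diff_in_means(rows)
active_only = diff_in_means([r for r in rows if r[1]])
print(f"full randomized comparison: {overall:+.3f}")
print(f"'active users only' slice:  {active_only:+.3f}")
```

The full comparison lands near zero, which is what randomization bought. The "active only" slice shows a clearly negative effect that does not exist: the treatment let lower-engagement users into the treated actives, so the two slices are no longer comparable populations.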

-

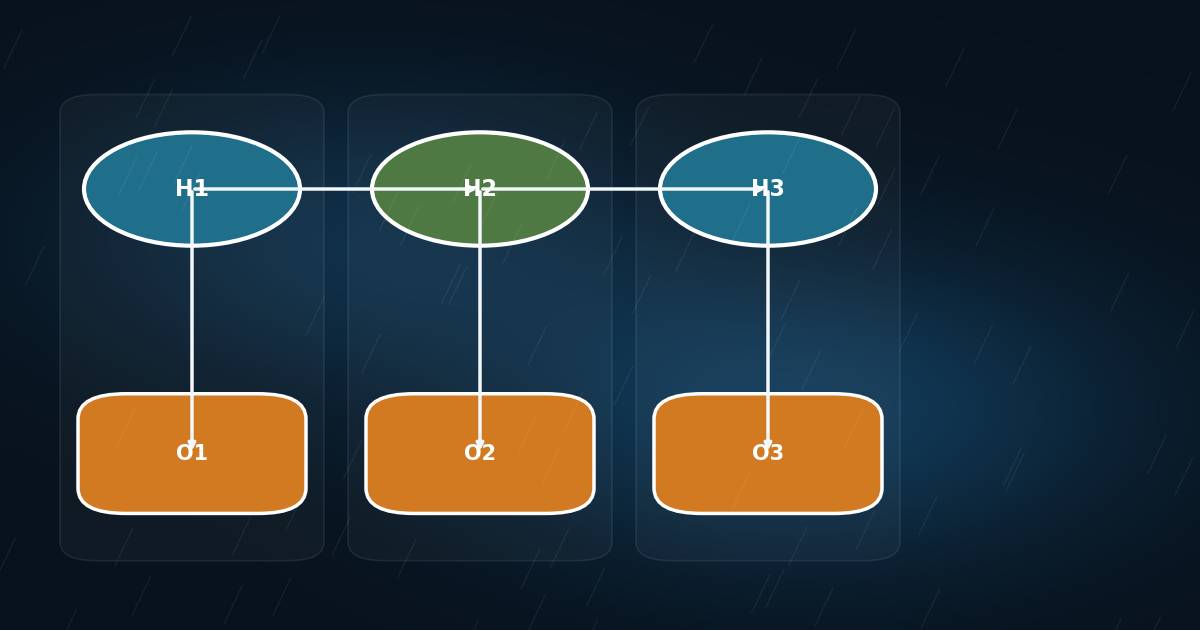

Once you unroll time, an HMM is just an ordinary Bayesian network with repeated structure. That makes temporal reasoning much less mysterious and much more programmable.
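Here is a sketch of that equivalence for a two-state HMM with made-up parameters. `joint` scores one complete assignment of the unrolled network as a product of per-node conditional probability tables; summing it over every hidden path gives the sequence likelihood, and the forward algorithm computes the same number by eliminating hidden states one time step at a time.

```python
from itertools import product

# Hypothetical two-state HMM; all numbers are illustrative.
STATES = (0, 1)
INIT = [0.6, 0.4]                  # P(Z_1)
TRANS = [[0.7, 0.3], [0.2, 0.8]]   # P(Z_t | Z_{t-1}): row = previous state
EMIT = [[0.9, 0.1], [0.3, 0.7]]    # P(X_t | Z_t): row = hidden state, column = symbol

def joint(path, obs):
    """Probability of one full assignment of the unrolled Bayesian network:
    a product of one conditional probability table entry per node."""
    p = INIT[path[0]] * EMIT[path[0]][obs[0]]
    for t in range(1, len(obs)):
        p *= TRANS[path[t - 1]][path[t]] * EMIT[path[t]][obs[t]]
    return p

def likelihood_brute_force(obs):
    # Sum the joint over every hidden path: exponential in len(obs).
    return sum(joint(path, obs) for path in product(STATES, repeat=len(obs)))

def likelihood_forward(obs):
    # Forward algorithm: the same sum, organized as variable elimination along the chain.
    alpha = [INIT[s] * EMIT[s][obs[0]] for s in STATES]
    for x in obs[1:]:
        alpha = [sum(alpha[r] * TRANS[r][s] for r in STATES) * EMIT[s][x] for s in STATES]
    return sum(alpha)

obs = [0, 1, 1, 0]
print(likelihood_brute_force(obs), likelihood_forward(obs))
```

Both functions query the same network; the brute-force sum costs O(S^T) for S states and T steps, while the forward pass costs O(T·S²) because it reuses the repeated structure.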

-

Counterfactuals are where causal APIs stop describing populations and start answering the harder question: what would have happened for this exact case?
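The standard recipe for a single-unit counterfactual is abduction, action, prediction. The sketch below applies it to a hypothetical linear model `Y := beta * X + U_y`; the model, the class name, the coefficient, and the observed values are all illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass
class LinearSCM:
    """Hypothetical structural causal model:
        X := U_x
        Y := beta * X + U_y
    with exogenous noise terms U_x, U_y (mean zero in the population)."""
    beta: float

    def abduct(self, x_obs, y_obs):
        # Step 1 (abduction): recover this unit's noise terms from what we saw.
        return {"u_x": x_obs, "u_y": y_obs - self.beta * x_obs}

    def predict_y(self, x, u_y):
        # Step 3 (prediction): rerun the Y mechanism with the unit's own noise.
        return self.beta * x + u_y

    def counterfactual_y(self, x_obs, y_obs, x_new):
        noise = self.abduct(x_obs, y_obs)
        # Step 2 (action): replace X's mechanism with the constant x_new.
        return self.predict_y(x_new, noise["u_y"])

    def interventional_mean_y(self, x_new):
        # Population-level E[Y | do(X = x_new)]: averages over U_y, whose mean is 0.
        return self.beta * x_new

scm = LinearSCM(beta=2.0)
# This unit had X = 1 and Y = 3.5, so its personal U_y is 1.5.
print(scm.counterfactual_y(x_obs=1.0, y_obs=3.5, x_new=0.0))  # 1.5
print(scm.interventional_mean_y(0.0))                          # 0.0
```

The unit-level answer (1.5) differs from the population answer (0.0) because abduction keeps this unit's own noise term instead of averaging it away.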

-

If observing X and setting X collapse to the same operation in your tooling, confounding has already beaten you.
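The gap is easy to see in a tiny hypothetical model where a binary confounder Z drives both X and Y. All probabilities below are made up. `observe` conditions on X, which also updates beliefs about Z; `do` uses the truncated factorization, dropping P(X | Z) and keeping P(Z) fixed.

```python
# Hypothetical binary model: Z -> X, Z -> Y, and X -> Y. Numbers are illustrative.
P_Z1 = 0.5

def p_z(z):
    return P_Z1 if z == 1 else 1 - P_Z1

def p_x_given_z(x, z):
    p1 = 0.8 if z == 1 else 0.2
    return p1 if x == 1 else 1 - p1

def p_y_given_xz(y, x, z):
    p1 = 0.1 + 0.1 * x + 0.6 * z  # X's own contribution to P(Y=1) is +0.1
    return p1 if y == 1 else 1 - p1

def observe(x):
    """P(Y=1 | X=x): conditioning on X also shifts beliefs about Z."""
    num = sum(p_z(z) * p_x_given_z(x, z) * p_y_given_xz(1, x, z) for z in (0, 1))
    den = sum(p_z(z) * p_x_given_z(x, z) for z in (0, 1))
    return num / den

def do(x):
    """P(Y=1 | do(X=x)): cut the Z -> X edge, keep P(Z) as it is."""
    return sum(p_z(z) * p_y_given_xz(1, x, z) for z in (0, 1))

print(f"observed difference:   {observe(1) - observe(0):.2f}")
print(f"interventional effect: {do(1) - do(0):.2f}")
```

This prints an observed difference of 0.46 against an interventional effect of 0.10: most of the apparent association comes from Z, not from X.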